Nimbiora Landing Zone - Technical Appendix

Table of Contents

- Appendix for Technical Leads

- Purpose

- Audience assumptions

- Architecture summary

- Design principles

- Multi-account roles in detail

- Identity and access model

- Security baseline

- Logging and evidence architecture

- Operations and observability

- Networking model

- Backup and recovery

- Delivery model and repository structure

- Automation model

- Strengths of the current design

- Features

- Delegated Administration Model

- Global Baseline Across Accounts

- Security

- Identity and Access (AWS Identity Center)

- Operations

- Networking

- Backup

- Technical details

- Immutable logging posture

- Identity governance depth

- Network maturity thresholds

- Compliance claims

- Technical FAQs

- Conclusion

Appendix for Technical Leads

Nimbiora Landing Zone v1.0.0 - Last updated: 13/05/2026

Purpose

This appendix is the technical companion to the website overview of the Nimbiora Landing Zone. It is written for CTOs, platform leads, cloud architects, security leads, and senior engineers who want to understand the architectural decisions, control model, operational approach, and implementation boundaries of the landing zone before adopting it in a real AWS environment.

The current design describes a multi-account AWS foundation built around AWS Organizations, Terraform, and Terragrunt, with a clear separation between management, network, security, operations, and workload concerns. It is designed to provide a low-friction starting point for sandbox and early-stage environments while preserving a direct path toward a hardened production-grade landing zone using the same structural model.

Audience assumptions

This appendix assumes the reader is comfortable with AWS core concepts such as accounts, IAM, VPCs, CloudTrail, AWS Config, centralized logging, and infrastructure as code. It explains how those building blocks are assembled in the Nimbiora model, why specific controls are placed in dedicated accounts, and which maturity trade-offs are intentionally made in version 1.0.0.

Architecture summary

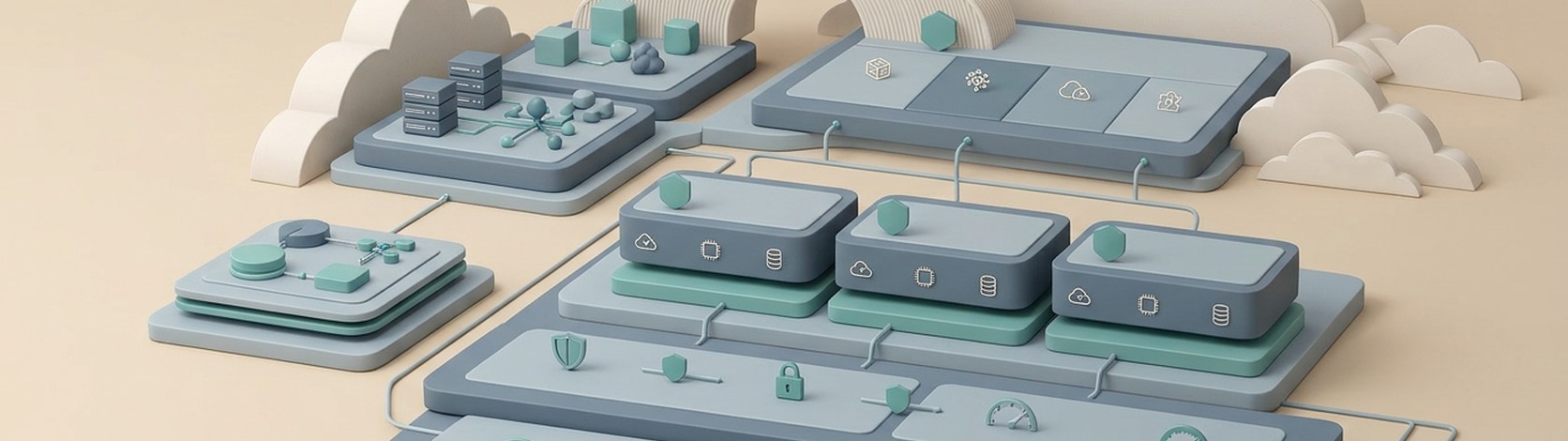

The landing zone implements a five-account baseline topology consisting of Management, Network, Security, Operations, and Sandbox accounts. This account model uses AWS accounts as the primary blast-radius boundary, which means security, billing, administrative ownership, and operational risk are partitioned first at the account level rather than only through tags, naming conventions, or IAM rules.

| Account | Primary role | Key responsibilities |
|---|---|---|
| Management | Organizational control plane | AWS Organizations root, organizational units, SCPs, account lifecycle, consolidated billing, backup policies. |
| Network | Connectivity and IP governance | Shared networking services, VPC connectivity model, Transit Gateway or peering, Client VPN, IPAM, Network Manager. |
| Security | Security services and evidence | Delegated administration for Security Hub, GuardDuty, Inspector, Macie, and Firewall Manager; centralized security findings and log storage. |
| Operations | Shared operations tooling | Systems Manager, CloudWatch metrics aggregation, Resource Explorer, backup delegation, Terraform backends, Route 53 DNS, operational automation. |
| Sandbox | Initial workload environment | Safe experimentation, testing, training, and proof-of-concept deployments using the same backbone as future workload accounts. |

This topology is intentionally minimal but opinionated. It creates enough separation to implement centralized governance and security controls, while avoiding the overhead of a large enterprise platform from day one.

Design principles

Multi-account governance

The design treats separate AWS accounts as the main containment boundary for privilege, cost, experimentation, and operational ownership. This is a stronger model than running all workloads in one account because it limits the impact of misconfiguration, overly broad permissions, accidental deletions, and budget overruns to a smaller scope.

A practical consequence is that shared platform capabilities are not hosted in workload accounts. Security services, log archives, network control points, and operational tooling are isolated into dedicated accounts so workload administrators cannot easily tamper with foundational controls.

Infrastructure as code ownership

The landing zone is fully codified using Terraform modules orchestrated with Terragrunt, and the codebase is written from scratch rather than assembled from third-party landing-zone modules. This decision favors transparency, reviewability, and long-term ownership over faster initial assembly from external dependencies.

The key architectural split is between reusable shared modules and account-role-specific baseline modules. Shared modules encapsulate common service implementations such as VPCs, GuardDuty, or budgets, while baseline modules compose them into opinionated account baselines for management, network, security, operations, or workloads.

Scalable foundation

Version 1.0.0 is optimized for sandbox, training, and early migration scenarios, not for maximum enterprise complexity on day one. The important point is that the same account structure, IaC workflow, and control patterns can later be hardened and expanded rather than replaced.

Multi-region readiness

The landing zone defines a primary region, a secondary backup region, and support for us-east-1 where some AWS global-service dependencies still exist. The default regional profile is eu-west-1 as primary, eu-central-1 as backup, and us-east-1 for required global-service integrations.

Multi-account roles in detail

Management account

The Management account is the AWS Organizations root account and remains the highest-sensitivity administrative boundary in the platform. It owns organization-wide constructs such as OUs, SCPs, account creation and deletion, consolidated billing, and backup policies, but day-to-day operation of security tooling is intentionally delegated away from it.

That separation matters for two reasons. First, it reduces the number of people and workflows that require access to the most sensitive account in the organization. Second, it avoids turning the management account into a multi-purpose operations hub, which would weaken separation of duties and increase the blast radius of compromise.

Network account

The Network account centralizes connectivity and IP governance services such as Client VPN, VPC peering or Transit Gateway, IPAM, and Network Manager. In this model, the network account becomes the control plane for shared connectivity rather than allowing each workload team to independently build network topology.

The design currently supports a hybrid evolution path. Smaller deployments can start with VPC peering for cost and simplicity, then migrate to a Transit Gateway hub-and-spoke pattern as the number of accounts, VPCs, and routing requirements grows.

Security account

The Security account is the delegated administrator for Security Hub, GuardDuty, Inspector, Firewall Manager, and Macie. It is also the central repository for security-relevant logs such as CloudTrail, AWS Config, and VPC Flow Logs. Centralizing both findings and evidence in a dedicated account is one of the strongest decisions in this design. It protects evidence integrity and gives reviewers a single place to assess the organization's security posture.

For technical leads, the main implication is operational: workload-account administrators should not own the storage location for organization-wide audit evidence. That separation is essential if the landing zone is expected to support forensic workflows, internal audits, or customer due-diligence requests.

Operations account

The Operations account hosts shared management and observability capabilities such as Systems Manager, CloudWatch metrics aggregation, Resource Explorer, centralized Terraform backends, and delegated AWS Backup administration. This account becomes the operational utility layer of the platform: not the place where workloads run, but the place from which they are monitored, inventoried, and maintained.

This is also the logical place to anchor platform automation, recurring maintenance tasks, and cross-account observability patterns because it avoids overloading either the Management or Security account with day-to-day operational concerns.

Sandbox account

The Sandbox account is the initial workload account and is explicitly intended for experimentation, training, and non-critical testing. Its value is not that it is less structured than production, but that it inherits the same shared backbone so teams can validate patterns in an environment that still reflects the future target architecture.

Identity and access model

The design uses AWS IAM Identity Center as the human access plane and explicitly avoids long-lived IAM users for day-to-day administration. Users obtain short-lived credentials through single sign-on and assume account-scoped roles defined by permission sets. This is materially safer than distributing static access keys to engineers or contractors.

The operational roles include Organization Admin, Operations, Security, Network, Developer, Finance, and Audit. This role taxonomy is a good starting point because it maps responsibilities to platform functions rather than granting a generic administrator role everywhere.

The configuration also applies permissions boundaries to developer roles to reduce privilege-escalation risk. For production-grade evolution, this should be extended into a broader permission-governance model. That model would combine permission sets, permissions boundaries where appropriate, and SCPs at OU or account level, so that developers, operators, and auditors each receive only the subset of actions they require.
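The layering works because of IAM's evaluation logic. An action is allowed only if every applicable layer (SCPs, the permissions boundary, and the identity policy) allows it, and an explicit deny in any layer wins. The sketch models this with plain sets of action names. It deliberately ignores wildcards in action names, resource ARNs, conditions, session policies, and resource-based policies. The action lists assigned to each layer are hypothetical.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass
class PolicyLayer:
    """One simplified policy layer: explicit allows and explicit denies by action name."""
    allow: set[str] = field(default_factory=set)
    deny: set[str] = field(default_factory=set)

    def allows(self, action: str) -> bool:
        # "*" stands in for a full-access allow such as the default FullAWSAccess SCP.
        return "*" in self.allow or action in self.allow


def is_allowed(action: str, identity: PolicyLayer, boundary: PolicyLayer | None,
               scp: PolicyLayer) -> bool:
    """Simplified IAM evaluation: explicit deny wins, otherwise every layer must allow."""
    layers = [identity, scp] + ([boundary] if boundary is not None else [])
    if any(action in layer.deny for layer in layers):
        return False
    return all(layer.allows(action) for layer in layers)


# Illustrative layers; the action lists are hypothetical.
developer_policy = PolicyLayer(allow={"iam:CreateRole", "s3:GetObject", "ec2:RunInstances"})
developer_boundary = PolicyLayer(allow={"s3:GetObject", "ec2:RunInstances"})
root_scp = PolicyLayer(allow={"*"}, deny={"organizations:LeaveOrganization"})

if __name__ == "__main__":
    for action in ("s3:GetObject", "iam:CreateRole", "organizations:LeaveOrganization"):
        print(action, is_allowed(action, developer_policy, developer_boundary, root_scp))
```

Passing `None` as the boundary makes `iam:CreateRole` allowed again. That is the kind of path a boundary is meant to close: without it, a developer who can create roles could create and use one with broader permissions than their own.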

Security baseline

The platform is designed to achieve more than 95 percent alignment with CIS AWS Foundations Benchmark v5.0.0, AWS Foundational Security Best Practices v1.0.0, and NIST SP 800-53 Rev. 5 through a combination of centralized services and per-account defaults.

Detective services

The Security account centrally manages GuardDuty, Inspector, Macie, and Security Hub. In practical terms, this gives the platform native AWS telemetry for threat detection, vulnerability management, sensitive-data discovery, and compliance posture aggregation.

Configuration and audit evidence

AWS Config is enabled in every account and region, CloudTrail and other audit logs are retained centrally, and DNS query logs plus VPC Flow Logs are exported to centralized storage. This combination is significant because it supports both compliance reporting and root-cause analysis: Config answers what changed, CloudTrail answers who did it, and network or DNS logs can help explain how traffic or name resolution behaved during an event.

Account hardening defaults

The global baseline includes default VPC cleanup, EBS encryption by default, S3 Public Access Block, IAM password-policy hardening, IAM Access Analyzer, VPC Block Public Access posture, alternate contacts, baseline budgets, and account aliases. Most of these controls map directly to requirements in the security benchmarks above. Together, they remove many of the insecure defaults and operational blind spots common in unmanaged AWS accounts.
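As an illustration of how one of these defaults can be verified, the sketch below checks an account password policy against two CIS AWS Foundations Benchmark recommendations: a minimum length of 14 and prevention of reuse of the last 24 passwords. These thresholds are assumptions to confirm against the benchmark version you report on. The dictionary keys follow the shape of the IAM `GetAccountPasswordPolicy` response (for example, the `PasswordPolicy` element returned by boto3's `get_account_password_policy()`). Fetching the policy is left out so the check stays self-contained.

```python
from typing import Any

# Thresholds assumed from CIS AWS Foundations Benchmark recommendations;
# verify against the exact benchmark version in use.
MIN_LENGTH = 14
MIN_REUSE_PREVENTION = 24


def password_policy_findings(policy: dict[str, Any]) -> list[str]:
    """Return human-readable findings; an empty list means the policy passes."""
    findings = []
    length = policy.get("MinimumPasswordLength", 0)
    if length < MIN_LENGTH:
        findings.append(f"MinimumPasswordLength is {length}, expected >= {MIN_LENGTH}")
    # PasswordReusePrevention is absent from the API response when it is not configured.
    reuse = policy.get("PasswordReusePrevention", 0)
    if reuse < MIN_REUSE_PREVENTION:
        findings.append(
            f"PasswordReusePrevention is {reuse}, expected >= {MIN_REUSE_PREVENTION}")
    return findings


if __name__ == "__main__":
    hardened = {"MinimumPasswordLength": 14, "PasswordReusePrevention": 24, "RequireSymbols": True}
    weak = {"MinimumPasswordLength": 8}
    print(password_policy_findings(hardened))
    print(password_policy_findings(weak))
```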

Guardrails

The landing zone includes an expandable catalog of mandatory guardrails implemented as SCPs. From a technical-lead perspective, this is an important area to expand over time. SCPs are the strongest preventive control available at organization level: they restrict entire classes of actions in member accounts regardless of what account-level IAM policies allow.

Logging and evidence architecture

The design centralizes security-critical logs in the Security account rather than storing them in workload accounts. This materially improves tamper resistance, because a workload administrator should not be able to delete, overwrite, or reconfigure the organization's main evidence archive for their account.

The named log categories include CloudTrail, Config, DNS logs, VPC Flow Logs, GuardDuty findings, and Macie findings, with retention controls and Athena-based analytics over centralized S3 storage. That design supports both periodic review and ad hoc investigation, and it is a strong fit for security operations that need low-friction query access without first building a separate SIEM pipeline.
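To illustrate the kind of ad hoc investigation this enables, the sketch builds an Athena SQL query that lists root-user activity from centralized CloudTrail logs. It assumes a table created with the CloudTrail schema from the Athena documentation, with columns such as `eventtime`, `eventsource`, `eventname`, `useridentity`, `sourceipaddress`, and `recipientaccountid`. The table name `cloudtrail_org_logs` is hypothetical. In practice, the query would be submitted through the Athena console, CLI, or API within the configured workgroup.

```python
from datetime import datetime, timezone


def root_activity_query(table: str, since: datetime) -> str:
    """Build a query for actions performed by any account root user since `since`."""
    # Minimal guard so the table name cannot carry arbitrary SQL.
    if not table.replace("_", "").replace(".", "").isalnum():
        raise ValueError(f"unexpected table name: {table!r}")
    # CloudTrail stores eventtime as an ISO 8601 UTC string, so string comparison works.
    since_iso = since.astimezone(timezone.utc).strftime("%Y-%m-%dT%H:%M:%SZ")
    return (
        "SELECT eventtime, recipientaccountid, eventsource, eventname, sourceipaddress\n"
        f"FROM {table}\n"
        "WHERE useridentity.type = 'Root'\n"
        f"  AND eventtime >= '{since_iso}'\n"
        "ORDER BY eventtime DESC"
    )


if __name__ == "__main__":
    print(root_activity_query("cloudtrail_org_logs",
                              datetime(2026, 5, 1, tzinfo=timezone.utc)))
```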

Operations and observability

The operations baseline relies on AWS Systems Manager for host inventory, patching foundations, and fleet-management prerequisites. It also includes CloudWatch metrics centralization, Resource Explorer for organization-wide discovery, cost anomaly detection, monthly budgets, and scheduling for non-production EC2 shutdown windows.

This operational profile is sensible for a landing zone because it solves the first-order visibility problems early: what exists, which resources are running, which accounts spend money unexpectedly, and how basic patch or agent controls are anchored. It also avoids the common anti-pattern where observability is treated as a workload concern rather than a platform capability.
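The non-production shutdown windows mentioned above reduce to a simple decision per instance. Is the instance opted in by tag, and is the current time inside its running window? The sketch uses the landing zone's opt-in tag (`instance_scheduler_schedule = office-hours`). The window itself (08:00 to 19:00, Monday to Friday, in the scheduler's local time) is hypothetical; the landing zone expresses its real windows as iCal schedules rather than Python constants.

```python
from __future__ import annotations

from datetime import datetime, time

SCHEDULE_TAG = "instance_scheduler_schedule"
SCHEDULE_VALUE = "office-hours"

# Hypothetical window in the scheduler's local time zone; the landing zone
# defines its real windows as iCal schedules.
WINDOW_START = time(8, 0)
WINDOW_STOP = time(19, 0)
WORKING_DAYS = frozenset({0, 1, 2, 3, 4})  # Monday=0 through Friday=4


def desired_state(tags: dict[str, str], now: datetime) -> str | None:
    """Return "running" or "stopped" for opted-in instances, or None if unscheduled."""
    if tags.get(SCHEDULE_TAG) != SCHEDULE_VALUE:
        return None  # instances without the tag are never touched
    in_window = now.weekday() in WORKING_DAYS and WINDOW_START <= now.time() < WINDOW_STOP
    return "running" if in_window else "stopped"


if __name__ == "__main__":
    tags = {SCHEDULE_TAG: SCHEDULE_VALUE, "Name": "build-runner"}
    print(desired_state(tags, datetime(2026, 5, 13, 10, 30)))  # running (a Wednesday)
```

Returning `None` for untagged instances, rather than "stopped", keeps the scheduler strictly opt-in. Production instances that lack the tag are never affected.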

Networking model

The networking model takes an intentionally staged approach. The low-complexity starting point is central-VPC connectivity with VPC peering. The target scalable model is a Transit Gateway with shared connectivity, route propagation, and hub-and-spoke governance.

The design also covers centralized IPAM, cross-account RAM sharing of address pools, VPC provisioning patterns, private and public subnet strategy, NAT configuration, VPC endpoints, DNS support, flow logging, and Client VPN lifecycle as code. For technical leads, the most important takeaway is that networking is treated as a governed shared service, not something every account improvises independently.
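The pool hierarchy can be illustrated with Python's standard `ipaddress` module. A top-level private pool is split into regional sub-pools, and each new VPC takes the next free block of a fixed size. The CIDR sizes (a /8 top-level pool, /12 regional pools, /20 VPCs) and the first-fit strategy are assumptions for illustration. In the landing zone, AWS VPC IPAM performs the real allocation when a VPC requests a CIDR from a shared pool.

```python
from ipaddress import IPv4Network


class Pool:
    """A toy first-fit allocator standing in for an IPAM pool."""

    def __init__(self, cidr: str):
        self.cidr = IPv4Network(cidr)
        self.allocated: list[IPv4Network] = []

    def allocate(self, prefixlen: int) -> IPv4Network:
        # Walk candidate blocks in address order and take the first non-overlapping one.
        for candidate in self.cidr.subnets(new_prefix=prefixlen):
            if not any(candidate.overlaps(used) for used in self.allocated):
                self.allocated.append(candidate)
                return candidate
        raise RuntimeError(f"{self.cidr} has no free /{prefixlen}")


top_level = Pool("10.0.0.0/8")
regional = {
    "eu-west-1": Pool(str(top_level.allocate(12))),     # 10.0.0.0/12
    "eu-central-1": Pool(str(top_level.allocate(12))),  # 10.16.0.0/12
}

vpc_a = regional["eu-west-1"].allocate(20)  # 10.0.0.0/20
vpc_b = regional["eu-west-1"].allocate(20)  # 10.0.16.0/20

if __name__ == "__main__":
    print(vpc_a, vpc_b, regional["eu-central-1"].cidr)
```

Because every VPC draws from the same hierarchy, overlap is prevented by construction. This is what makes later peering or Transit Gateway routing possible without renumbering.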

Backup and recovery

Backup is delegated to the Operations account and implemented through organization-level, tag-driven policy classes such as critical cross-region, critical same-region, standard, and archive. This is a strong pattern because it decouples backup intent from per-resource manual setup and makes policy assignment consistent across accounts.

The design also implements central vault copies, vault lock, regional copies, and retention controls. That combination is particularly relevant for ransomware resilience and regulated workloads because it helps ensure that backup copies are not only created but also protected against casual deletion or tampering.
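AWS Backup Vault Lock enforces a minimum and, optionally, a maximum retention period on a vault. A backup or copy job whose retention falls outside that range fails rather than storing the recovery point. A pre-deployment check along those lines can catch misconfigured plan rules before they fail at run time. The sketch below is such a check; the rule names and day counts are hypothetical.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass(frozen=True)
class VaultLock:
    min_retention_days: int
    max_retention_days: int | None = None  # None: no upper bound configured


def retention_violations(lock: VaultLock, rules: dict[str, int | None]) -> list[str]:
    """Check plan rules (name -> delete-after days, None = keep forever) against a lock."""
    problems = []
    for name, days in rules.items():
        if days is not None and days < lock.min_retention_days:
            problems.append(
                f"{name}: {days}d is below the vault minimum of {lock.min_retention_days}d")
        # Indefinite retention exceeds any configured maximum.
        if lock.max_retention_days is not None and (days is None or days > lock.max_retention_days):
            shown = "indefinite" if days is None else f"{days}d"
            problems.append(
                f"{name}: {shown} exceeds the vault maximum of {lock.max_retention_days}d")
    return problems


if __name__ == "__main__":
    lock = VaultLock(min_retention_days=7, max_retention_days=365)
    print(retention_violations(lock, {"critical-daily": 35, "critical-hourly": 3}))
```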

Delivery model and repository structure

The implementation relies on Terragrunt’s cascading configuration model to reduce duplication across accounts and regions, while keeping customer-specific identifiers and feature toggles in a small set of HCL files. The repository is organized around reusable module code, global account-scope configuration, and regional folders for region-bound resources such as backup definitions.

Terraform and Terragrunt run inside Docker containers with pinned tool versions, plugin caching, S3 state backends, and DynamoDB locking. This is a good engineering choice because it reduces workstation drift and makes execution environments more deterministic across engineers and CI jobs.
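Per-module state isolation typically comes from deriving the S3 state key from the module's path relative to a root folder. This is the same idea as using Terragrunt's `path_relative_to_include()` in a remote-state key. The sketch reproduces that derivation in Python; the folder layout in the example is hypothetical. With Terraform's S3 backend, the DynamoDB lock item is keyed by bucket and state key, so distinct keys also mean independent locks.

```python
from pathlib import PurePosixPath


def state_key(repo_root: str, module_dir: str) -> str:
    """Derive a unique S3 state key from a module directory's path under the root."""
    relative = PurePosixPath(module_dir).relative_to(PurePosixPath(repo_root))
    if str(relative) == ".":
        raise ValueError("the root folder itself is not a deployable module")
    return f"{relative}/terraform.tfstate"


def lock_id(bucket: str, key: str) -> str:
    # The S3 backend's DynamoDB lock item uses "<bucket>/<key>" as its LockID.
    return f"{bucket}/{key}"


if __name__ == "__main__":
    key = state_key("/repo/live", "/repo/live/security/eu-west-1/guardduty")
    print(key)
    print(lock_id("example-tf-state", key))
```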

Why Terragrunt matters here

For technical leads evaluating maintainability, Terragrunt is doing three jobs in this design: hierarchical configuration inheritance, orchestration of repeated module patterns across many accounts and regions, and reduction of copy-paste overhead. The real benefit is not just less duplication, but lower risk of silent divergence between supposedly identical account baselines.

Automation model

The supporting automation includes multi-account orchestration scripts, AWS SSO profile generation, SSO credential refresh, and infrastructure drift detection. These scripts matter more than they first appear because they directly affect day-two platform usability: without them, a well-designed landing zone often becomes painful to operate across multiple accounts. Scripts can optionally run inside a Docker container for greater portability on Windows and macOS.

Strengths of the current design

The current design has several clear strengths for a v1.0.0 landing zone intended to support sandbox adoption and later production hardening.

- Clear separation of concerns across five accounts rather than collapsing everything into a single administrative boundary.

- Strong emphasis on centralized security services and centralized evidence storage.

- Good early adoption of AWS-native detective and posture services such as Security Hub, GuardDuty, Inspector, Macie, and AWS Config.

- Practical operational baseline including Systems Manager, Resource Explorer, cost controls, and centralized metrics.

- A credible path from low-cost sandbox to production-grade maturity without throwing away the repository structure or account model.

- Full infrastructure-as-code ownership with reviewability and reproducibility built into the platform model.

Features

Terraform/Terragrunt Architecture

The entire landing zone is codified in Terraform modules with Terragrunt for DRY orchestration.

The landing zone separates two concerns:

- Shared modules — reusable AWS service configurations (GuardDuty, VPC, Budget, etc.) consumed by many accounts

- Baseline modules — account-type-specific compositions that wire shared modules together for a given account role (management, security, operations, network)

| Property | Benefit |
|---|---|
| Reproducible | Identical environments every time; no snowflake accounts. |
| Auditable | Every change goes through Git, giving a full history of who changed what and when. |
| Reviewable | A pull-request workflow enables peer review before infrastructure changes reach production. |
| Testable | Terraform plan output shows exactly what will change before apply. |
| Modular | Add new accounts, regions, or services by composing existing modules. |
| Documented by default | Variables have descriptions; modules have defined inputs and outputs. |

All customer-specific values (account IDs, ARNs, feature toggles, OU IDs) live in a small set of HCL configuration files.

Dockerized Terraform

All Terraform/Terragrunt commands run inside Docker containers for consistency and reproducibility. The execution environment provides:

- Exact tool versions (Terraform, Terragrunt, TFLint)

- Mounted AWS credentials and SSH keys

- Plugin caching to speed up repeated runs

- Enforced consistency across workstations

- Per-module state files in S3 (each module has isolated state)

- DynamoDB locking prevents concurrent modifications

Folder Hierarchy

Your infrastructure uses a cascading configuration model that minimizes code duplication.

Each level inherits the shared settings defined in the levels above it.

The Module folder contains the shared Terraform code used across multiple accounts.

Every account has a Global folder for resources and settings that are created only once per account or that are not regional in AWS. The regional folders contain resources with a regional scope. The architecture handles complex multi-account AWS infrastructure while keeping the layout simple and preventing common mistakes such as deploying to the wrong account or applying inconsistent tags.
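The inheritance rule can be modelled as a recursive merge in which deeper levels override shallower ones. This is roughly what Terragrunt's `include` blocks do with `merge_strategy = "deep"`. The sketch uses plain dictionaries, and the keys (`tags`, `budget_limit_usd`, `region`) are hypothetical. Terragrunt's real merge has its own rules for lists and blocks, which this sketch ignores.

```python
from copy import deepcopy
from typing import Any


def deep_merge(parent: dict[str, Any], child: dict[str, Any]) -> dict[str, Any]:
    """Return parent overlaid with child; nested dicts merge, other values are replaced."""
    merged = deepcopy(parent)
    for key, value in child.items():
        if isinstance(value, dict) and isinstance(merged.get(key), dict):
            merged[key] = deep_merge(merged[key], value)
        else:
            merged[key] = deepcopy(value)
    return merged


def resolve(*levels: dict[str, Any]) -> dict[str, Any]:
    """Fold levels from root to leaf, for example root -> account -> region."""
    result: dict[str, Any] = {}
    for level in levels:
        result = deep_merge(result, level)
    return result


# Hypothetical values for each level of the folder hierarchy.
root = {"tags": {"managed_by": "terragrunt", "project": "nimbiora"}, "budget_limit_usd": 100}
account = {"tags": {"account_role": "sandbox"}, "budget_limit_usd": 250}
region = {"region": "eu-west-1"}

config = resolve(root, account, region)

if __name__ == "__main__":
    print(config)
```

The account-level budget overrides the root default while the tag maps combine. This is the behaviour that keeps account baselines from silently diverging.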

Automation Scripts

The landing zone code ships with scripts that automate deployment and maintenance tasks. The scripts can run natively in a Linux environment or inside a Docker container for compatibility across operating systems.

Multi-Account Orchestration

Execute commands across multiple accounts.

AWS SSO Profile Generation

Auto-generates AWS CLI profiles for each account.
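A generator of this kind can be sketched with Python's `configparser`. It writes the `[sso-session ...]` and `[profile ...]` sections that AWS CLI v2 reads for IAM Identity Center sign-in. The account names and IDs, portal URL, session name, and role name below are placeholders. The landing zone's own script may name and lay out profiles differently.

```python
import configparser
import io

SSO_SESSION = "nimbiora"                         # hypothetical session name
START_URL = "https://example.awsapps.com/start"  # placeholder access portal URL
SSO_REGION = "eu-west-1"

# Placeholder account IDs; real values come from the landing zone configuration.
ACCOUNTS = {
    "management": "111111111111",
    "security": "222222222222",
    "sandbox": "333333333333",
}


def render_cli_config(accounts: dict[str, str], role: str, region: str) -> str:
    """Render ~/.aws/config content with one profile per account for a given role."""
    cfg = configparser.ConfigParser()
    cfg[f"sso-session {SSO_SESSION}"] = {
        "sso_start_url": START_URL,
        "sso_region": SSO_REGION,
        "sso_registration_scopes": "sso:account:access",
    }
    for name, account_id in sorted(accounts.items()):
        cfg[f"profile {name}-{role.lower()}"] = {
            "sso_session": SSO_SESSION,
            "sso_account_id": account_id,
            "sso_role_name": role,
            "region": region,
        }
    buffer = io.StringIO()
    cfg.write(buffer)
    return buffer.getvalue()


if __name__ == "__main__":
    print(render_cli_config(ACCOUNTS, role="Audit", region="eu-west-1"))
```

After `aws sso login --sso-session nimbiora`, each generated profile can be used with `--profile`.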

SSO Credential Refresh

Log in once and export temporary credentials for all accounts.

Infrastructure Drift Detection

Detects manual changes or broken code across the entire landing zone infrastructure.
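One common way to implement drift detection is to plan every module with Terraform's `-detailed-exitcode` flag. With this flag, `plan` exits with 0 when there are no changes, 1 on error, and 2 when changes are pending. The sketch assumes that each module directory can be planned with `terragrunt plan -detailed-exitcode`, with the flag forwarded to Terraform, and that credentials are already exported. It is not the landing zone's actual drift script.

```python
import subprocess
from pathlib import Path

# Terraform's documented meanings for `plan -detailed-exitcode`.
EXIT_MEANING = {0: "in-sync", 1: "error", 2: "drift"}


def plan_module(module_dir: Path) -> str:
    """Plan one module and classify the result; assumes terragrunt is on PATH."""
    result = subprocess.run(
        ["terragrunt", "plan", "-detailed-exitcode", "-input=false"],
        cwd=module_dir,
        capture_output=True,
        text=True,
    )
    return EXIT_MEANING.get(result.returncode, "error")


def summarize(results: dict[str, str]) -> dict[str, list[str]]:
    """Group module paths by status so drift and errors can be reported separately."""
    summary: dict[str, list[str]] = {"in-sync": [], "drift": [], "error": []}
    for module, status in sorted(results.items()):
        summary[status].append(module)
    return summary


def detect_drift(modules: list[Path]) -> dict[str, list[str]]:
    return summarize({str(m): plan_module(m) for m in modules})
```

Exit code 1 is kept separate from drift on purpose. A module that fails to plan, for example because of broken code or expired credentials, is a different problem from one whose live resources no longer match the code.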

Delegated Administration Model

Our architecture leverages a delegated administration model where services are activated at the Organization level but managed by specialized platform accounts. This approach covers critical areas such as security monitoring, vulnerability management, network governance, and backup administration. By shifting responsibility from the management account to dedicated units, the landing zone enforces least-privilege operational ownership and prevents administrative bottlenecks.

Global Baseline Across Accounts

The configuration of every account is standardized so features are applied consistently.

The baseline configuration includes:

- AWS Config recording and centralized delivery.

- DNS query logging to central storage.

- Athena workgroup setup.

- Monthly budgeting.

- Default VPC cleanup.

- EBS encryption by default.

- S3 public access blocking at account level.

- IAM password policy hardening.

- IAM Access Analyzer enablement.

- VPC Block Public Access policy mode.

- Systems Manager inventory role/configuration.

- CloudWatch metrics centralized in the Operations account.

- Default alternate contacts for billing, security, and operations.

- Account alias.

Security

- Amazon GuardDuty for threat detection, with configurable protection options:

  - S3

  - EC2 runtime

  - RDS

  - EC2 malware

  - EKS runtime

  - Lambda

  - EKS audit logs

- Amazon Macie for data security, with automatic member enrollment and central findings export.

- Amazon Inspector for vulnerability management of EC2, ECR, and Lambda.

- AWS Config recorder enabled in every account and region.

- Amazon Route 53 DNS query logs sent to CloudWatch.

- Route 53 Resolver query logs centralized in an S3 bucket.

- AWS Security Hub CSPM for cloud security posture management, with standards-based control policies:

  - CIS AWS Foundations Benchmark v5.0.0

  - AWS Foundational Security Best Practices v1.0.0

  - NIST Special Publication 800-53 Revision 5

- Audit log retention in centralized S3 buckets, with configurable expiration for:

  - CloudTrail

  - Config

  - DNS logs

  - VPC flow logs

  - GuardDuty findings

  - Macie findings

- Security log analytics with centralized query access via Athena.

- An expandable catalog of essential mandatory guardrails implemented as service control policies (SCPs).

- Default VPC deleted in every enabled region.

- EBS encryption by default enabled in every account and region.

- S3 Public Access Block enabled by default in every account.

- IAM password policy hardening: enforces stronger passwords and reuse controls.

- IAM Access Analyzer enabled in every account and region.

- VPC Block Public Access posture: applies a default public-access prevention model for network resources.

Identity and Access (AWS Identity Center)

- Central user and group management with IAM Identity Center, which issues short-lived, role-scoped credentials on demand; there are no long-lived IAM users.

- Permission sets for operational roles: Organization Admin, Operations, Security, Network, Developer, Finance, Audit.

- Permissions boundaries to mitigate privilege-escalation risk for the Developer role.

Operations

- AWS Systems Manager: creates the baseline needed for host inventory, patching, and fleet management.

- Automated SSM agent management and optional CloudWatch agent settings.

- Resource Scheduler for non-production EC2 instances, with start/stop windows using iCal schedules and tag targeting.

- Central CloudWatch metrics sink: the operations account receives metrics (optionally logs and traces) from all source accounts.

- AWS Backup delegated administrator account.

- Cost Anomaly Detection at the organization level.

- Central Terraform backends, isolated and regularly backed up for added protection.

- Resource Explorer with organization-wide aggregator index: any resource in any account, in any enabled region, is discoverable from one search interface.

- DNS and hosted zone delegation:

  - Central root hosted zone for the landing-zone namespace.

  - Per-account delegated hosted zones.

  - Automated parent-child NS delegation.

- Cost governance:

  - Baseline monthly budgets across accounts, with override capability.

  - Cost anomaly detection in the management account.

  - Scheduler-based savings for non-production compute: instances tagged instance_scheduler_schedule = office-hours are stopped outside office hours.
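The parent-child NS delegation pattern listed above reduces to one rule. For every per-account hosted zone, the central root zone needs an NS record set for the child's name, whose values are the child zone's name servers. The sketch computes those record sets. The domain, account labels, and name-server hostnames are placeholders. The 172800-second TTL mirrors Route 53's default for NS records but is an assumption here. Applying the records (for example, through the Route 53 `ChangeResourceRecordSets` API) is left out.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class NsRecordSet:
    name: str
    ttl: int
    values: tuple[str, ...]


def delegation_records(root_zone: str, child_name_servers: dict[str, list[str]],
                       ttl: int = 172800) -> list[NsRecordSet]:
    """Build the NS record sets the root zone needs to delegate each child zone."""
    root = root_zone.rstrip(".")
    records = []
    for label, servers in sorted(child_name_servers.items()):
        if not servers:
            raise ValueError(f"child zone {label}.{root} has no name servers yet")
        # Fully qualified names end with a dot in zone data.
        records.append(NsRecordSet(name=f"{label}.{root}.", ttl=ttl,
                                   values=tuple(s.rstrip(".") + "." for s in servers)))
    return records


# Placeholder child zones and name servers.
children = {
    "sandbox": ["ns-111.awsdns-11.com", "ns-222.awsdns-22.net"],
    "security": ["ns-333.awsdns-33.org"],
}
records = delegation_records("lz.example.com", children)

if __name__ == "__main__":
    for record in records:
        print(record)
```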

Networking

- Centralized IPAM architecture for private IP management and automated deployment of new VPCs.

- Optional Transit Gateway controls for hub-and-spoke routing, DNS support, and attachment defaults:

  - All workload VPCs attach to the TGW via cross-account RAM sharing

  - Cross-account attachments are auto-accepted, with no manual approval workflow

  - Routes are auto-propagated to the default route table

- IPAM:

  - Top-level private pool managed from the network account

  - Regional sub-pools shared to the entire organization via RAM

  - Workload account VPCs draw CIDR blocks from the shared pool automatically

  - No manual coordination required when provisioning new VPCs

- VPC provisioning framework with:

  - IPAM-based CIDR allocation

  - Subnet strategy (public/private)

  - NAT configuration

  - VPC endpoints creation (interface/gateway)

  - DNS support/hostnames

  - VPC Flow logs to central storage

  - TGW attachment and route propagation controls

  - VPC peering request/accept workflows

  - VPC BPA posture and exclusions

- Client VPN platform:

  - Client VPN endpoint lifecycle managed as code.

  - Certificate-driven server authentication with DNS validation support.

  - SAML-based federated authentication (IAM Identity Center).

  - Log retention controls.

  - Operational cost control through subnet-association management (pause/resume pattern).

Backup

- Organization-wide AWS Backup, delegated to the Operations account.

- Tag-driven organization backup policy with multiple plan classes:

  - Critical cross-region: first copy in the source account, a copy to a central vault protected by Vault Lock in the Operations account (main region), and a copy to a secondary region in the Operations account.

  - Critical same-region (for services without cross-region copy support).

  - Standard: backup in the source account, copied to a central vault in the Operations account (main region).

  - Archive: single copy in the source account.

- Resource-type opt-in policy for regional backup support.

- Critical vault lock policy support (including governance mode and retention controls).
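The plan classes above can be summarized as a mapping from a resource's backup tag to the places its recovery points end up. The sketch encodes that mapping as a pre-deployment lookup. The tag key `backup_plan` and the tag values are hypothetical, because the exact schema lives in the landing zone's backup policy files. The destinations for the critical same-region class are also an assumption, since the list above does not spell them out.

```python
BACKUP_TAG = "backup_plan"  # hypothetical tag key

# Where recovery points are stored for each plan class.
PLAN_DESTINATIONS = {
    "critical-cross-region": (
        "source account vault",
        "Operations central vault with Vault Lock (main region)",
        "Operations vault (secondary region)",
    ),
    # Assumption: as above, minus the cross-region copy.
    "critical-same-region": (
        "source account vault",
        "Operations central vault with Vault Lock (main region)",
    ),
    "standard": (
        "source account vault",
        "Operations central vault (main region)",
    ),
    "archive": ("source account vault",),
}


def destinations_for(tags: dict[str, str]) -> tuple[str, ...]:
    """Return backup destinations for a resource, or () if it is not opted in."""
    plan = tags.get(BACKUP_TAG)
    if plan is None:
        return ()
    if plan not in PLAN_DESTINATIONS:
        # Fail loudly: a mistyped tag should not leave a resource silently unprotected.
        raise ValueError(f"unknown backup plan class {plan!r}")
    return PLAN_DESTINATIONS[plan]


if __name__ == "__main__":
    print(destinations_for({BACKUP_TAG: "standard", "Name": "orders-db"}))
```

In an organization backup policy, a mistyped tag value simply matches no plan. Raising an error in a pre-deployment check like this one surfaces the mistake instead of leaving the resource without backups.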

Technical details

Preventive controls

The default catalog defines organization‑level preventive guardrails implemented as Service Control Policies (SCPs) and attached at the root (“ROOT” target).

At a high level, this set does three things:

- Protects organizational integrity (no one can detach a member account from the org).

- Protects core security and audit services (CloudTrail, Config, Security Hub, GuardDuty, Macie, Access Analyzer) from being disabled or materially weakened.

- Reduces high‑impact misuses of root and account‑wide IAM (root actions, root access keys, password policy weakening).

You should think of these as “do not touch” rails: they do not grant permissions, they remove the ability to turn off or escape the central governance plane, even for over‑privileged principals in member accounts.

deny-leave-organization

Prevent member accounts from leaving the AWS Organization.

- What it blocks

Any attempt by a member account to call organizations:LeaveOrganization, regardless of who initiates it inside that account.

- Why it matters

Without this, an administrator in a member account with Organizations permissions could detach the account from the org, instantly escaping SCPs, central billing, and delegated admins. That breaks your entire governance model for that account.

- Design implication

This makes the management account the only place from which account lifecycle and org membership can be controlled. It is a foundational guardrail for any multi‑account landing zone.
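An SCP with this effect is short. The sketch builds the policy document in Python and serializes it to the JSON that AWS Organizations accepts. The `Sid` is a placeholder, and the catalog's actual policy text may differ.

```python
import json


def deny_leave_organization_scp() -> dict:
    """SCP document denying organizations:LeaveOrganization to every principal in scope."""
    return {
        "Version": "2012-10-17",
        "Statement": [
            {
                "Sid": "DenyLeaveOrganization",
                "Effect": "Deny",
                "Action": "organizations:LeaveOrganization",
                "Resource": "*",
            }
        ],
    }


if __name__ == "__main__":
    print(json.dumps(deny_leave_organization_scp(), indent=2))
```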

deny-cloudtrail-configuration-changes

Prevent disabling, deleting, or reconfiguring CloudTrail, scoped to organization trails only.

- What it blocks

cloudtrail:DeleteTrail, StopLogging, UpdateTrail, PutEventSelectors, PutInsightSelectors conditioned on cloudtrail:IsOrganizationTrail = true.

- Why the condition is important

The condition ensures only org‑level trails are protected. It still allows workloads to manage local, non‑org trails if they have a valid need, but nobody in a member account can switch off or reconfigure the organization trail that feeds your central audit log.

- Design implication

This is your “immutable audit pipeline” guardrail for CloudTrail. Even if someone gets AdministratorAccess in a member account, they cannot neuter the org trail.

deny-config-recorder-changes

Prevent disabling or deleting AWS Config recorder and delivery channel.

- What it blocks

config:DeleteConfigurationRecorder, StopConfigurationRecorder, and DeleteDeliveryChannel everywhere in scope.

- Why it matters

AWS Config is your configuration change ledger. If someone can turn off the recorder or delete the delivery channel, you lose your historical view of what changed, when, and by whom.

- Design implication

This makes AWS Config always‑on in governed accounts. You still control where and how it delivers, but no local admin can switch it off to “clean up” evidence.

deny-securityhub-disable

Prevent disabling Security Hub and disassociating members from central governance.

- What it blocks

securityhub:DisableSecurityHub, DisassociateFromAdministratorAccount, and DeleteFindingAggregator.

- Why it matters

Security Hub is your CSPM and control‑status view. If a member account can disable or disassociate, you lose centralized visibility and compliance scoring for that account.

- Design implication

Ensures that once an account is enrolled in centrally managed Security Hub, it stays enrolled. A compromised account cannot hide by dropping out of aggregated findings.

deny-guardduty-disable

Prevent disabling GuardDuty and disassociating from delegated administration.

- What it blocks

guardduty:DeleteDetector, DisassociateFromAdministratorAccount, StopMonitoringMembers.

- Why it matters

GuardDuty is your threat‑detection signal. Deleting detectors or stopping monitoring is the classic “turn off the alarm” move after compromise.

- Design implication

With this guardrail, GuardDuty remains active in all governed accounts, and the delegated admin keeps visibility into findings even if the local account is hostile.

deny-macie-disable

Prevent disabling Amazon Macie and disassociating from delegated administration.

- What it blocks

macie2:DisableMacie, DisassociateFromAdministratorAccount.

- Why it matters

Macie covers sensitive‑data discovery and data exposure. A data‑exfiltrating actor has every incentive to turn it off or remove delegation to avoid detection.

- Design implication

Keeps Macie consistently enabled and centrally governed wherever you decide to use it, which is important for data‑protection and regulatory requirements.

deny-access-analyzer-disable

Prevent deleting IAM Access Analyzer analyzers used for governance.

- What it blocks

access-analyzer:DeleteAnalyzer globally in scope.

- Why it matters

IAM Access Analyzer is your cross‑account / public‑access exposure detector. Deleting analyzers removes an important control used to identify overly broad resource policies.

- Design implication

Ensures that any analyzer you deploy for governance cannot be removed by a local admin trying to hide newly created public or cross‑account exposures.

deny-iam-account-password-policy-changes

Prevent weakening or deleting the account password policy.

- What it blocks

iam:DeleteAccountPasswordPolicy, iam:UpdateAccountPasswordPolicy in all member accounts.

- Why it matters

The account password policy is a global control for IAM console passwords. If teams can loosen it, you end up with weak credentials and inconsistent standards.

- Design implication

Forces password‑policy lifecycle back to central platform ownership. Changes to complexity, rotation, or reuse must be done where SCPs permit (typically management/security), not per account.

deny-root-actions

Deny all root user actions in member accounts (SCPs cannot restrict management account root).

- What it blocks

Any action (Action = "*") when aws:PrincipalArn matches arn:aws:iam::*:root, across all governed member accounts.

- Why it matters

The account root user is effectively omnipotent. Best practice is to never use root except for the handful of tasks that require it, but in reality people sometimes log in as root during incidents or migrations, which is risky.

- Design implication

This SCP effectively turns off root for day‑to‑day operations in member accounts. The root user still exists but cannot perform actions while the SCP applies, which significantly reduces the risk that someone falls back to root and bypasses all your IAM controls.

Note: SCPs cannot restrict the management‑account root, so this applies to member accounts only.
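Expressed as a policy document, the guardrail could look roughly like the sketch below. The StringLike condition on aws:PrincipalArn mirrors the pattern commonly used in AWS example SCPs that restrict the root user; the Sid is hypothetical and the exact Nimbiora policy text may differ.

```python
import json

# Deny every action when the calling principal is an account root user.
deny_root_actions = {
    "Version": "2012-10-17",
    "Statement": [
        {
            "Sid": "DenyRootUser",  # hypothetical identifier
            "Effect": "Deny",
            "Action": "*",
            "Resource": "*",
            "Condition": {
                # Matches the root ARN of any account the SCP is attached to.
                "StringLike": {"aws:PrincipalArn": "arn:aws:iam::*:root"}
            },
        }
    ],
}

print(json.dumps(deny_root_actions, indent=2))
```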

deny-root-access-key-creation

Prevent creation of root user access keys across governed scope.

- What it blocks

iam:CreateAccessKey when aws:PrincipalArn is an account root (arn:aws:iam::*:root).

- Why it matters

Root access keys are among the most dangerous credentials possible: long‑lived, all‑powerful, and hard to contain once leaked. IAM identity policies and permissions boundaries cannot be attached to the root user, so in member accounts SCPs are the main organizational guardrail that still constrains it.

- Design implication

Even if someone does log in as root, they cannot mint a new root access key. Combined with the previous guardrail, this helps enforce the principle of “no root keys, no root usage” in all member accounts.
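The two root guardrails overlap on purpose: one plausible reason for keeping both is defense in depth, so the key-creation deny still holds if the broader deny is ever detached or rescoped. The sketch below is a deliberately simplified model of how their wildcards combine. It is not the AWS policy evaluation engine (for example, real action matching is case-insensitive while fnmatchcase is not), and the account ID and role name are placeholders.

```python
from fnmatch import fnmatchcase

# Each entry models one deny statement as (action pattern, principal ARN pattern).
ROOT_DENIES = [
    ("*", "arn:aws:iam::*:root"),                    # deny-root-actions
    ("iam:CreateAccessKey", "arn:aws:iam::*:root"),  # deny-root-access-key-creation
]


def is_denied(action: str, principal_arn: str) -> bool:
    """Return True if any modeled deny statement matches the request."""
    return any(
        fnmatchcase(action, action_pat) and fnmatchcase(principal_arn, arn_pat)
        for action_pat, arn_pat in ROOT_DENIES
    )


root = "arn:aws:iam::111122223333:root"
role = "arn:aws:iam::111122223333:role/PlatformAdmin"
print(is_denied("iam:CreateAccessKey", root))  # True
print(is_denied("s3:ListBucket", root))        # True
print(is_denied("iam:CreateAccessKey", role))  # False
```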

Immutable logging posture

The landing zone uses an AWS Organizations multi‑region trail that is owned by a central account and visible as an organization trail in member accounts.

- Log file validation is enabled, so CloudTrail produces signed digest files that make log tampering detectable; any modification or deletion of delivered log files can be detected with CloudTrail's validation tooling (for example, the aws cloudtrail validate-logs command).

- SCPs prevent disabling, deleting, or reconfiguring organization trails (StopLogging, DeleteTrail, UpdateTrail, and selector changes limited by cloudtrail:IsOrganizationTrail), so a compromised workload account cannot silently switch off the org‑level pipeline.
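Conceptually, each CloudTrail digest file records a SHA-256 hash of every log file delivered during the preceding period and is itself signed, so an edited log no longer matches its recorded hash. The sketch below shows only that comparison step, with an in-memory byte string standing in for a real log object. It omits the digest-signature and digest-chain checks that CloudTrail's own validation performs and is not a substitute for it.

```python
import hashlib
import hmac


def sha256_hex(data: bytes) -> str:
    """Hex-encoded SHA-256 of a log file's bytes."""
    return hashlib.sha256(data).hexdigest()


def log_file_unchanged(data: bytes, recorded_hash_hex: str) -> bool:
    """Compare a freshly computed hash with the one recorded in a digest (simplified)."""
    # Constant-time comparison of the two hex strings.
    return hmac.compare_digest(sha256_hex(data), recorded_hash_hex.lower())


original = b'{"Records":[{"eventName":"StopLogging"}]}'
recorded = sha256_hex(original)  # stands in for the value stored in a digest file
tampered = original.replace(b"StopLogging", b"DescribeTrails")

print(log_file_unchanged(original, recorded))  # True
print(log_file_unchanged(tampered, recorded))  # False
```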

To add tamper resistance, the design supports enabling AWS Backup for all critical audit buckets and continuously copying those logs into a backup vault protected by AWS Backup Vault Lock in compliance mode.

When Vault Lock is enabled in compliance mode with an appropriate retention period, and once the lock's grace period has ended, recovery points in that vault cannot be deleted or have their retention shortened, even by administrators or the account root user.

This turns the backup vault into the immutable evidence store: even if someone gained admin access to the logging account and tampered with or deleted the primary log objects, the locked backups would still exist for the configured retention window.
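As a minimal sketch of the lock step, assuming boto3's AWS Backup client, whose put_backup_vault_lock_configuration call accepts BackupVaultName, MinRetentionDays, MaxRetentionDays, and ChangeableForDays, a compliance-mode lock could be applied as follows. The wrapper function and its checks are illustrative, not part of the Nimbiora codebase; the client is passed in so the function can be exercised without AWS credentials.

```python
from typing import Protocol


class BackupClient(Protocol):
    # The one method of boto3.client("backup") that this sketch relies on.
    def put_backup_vault_lock_configuration(self, **kwargs): ...


def lock_vault_compliance_mode(
    client: BackupClient,
    vault_name: str,
    min_retention_days: int,
    max_retention_days: int,
    changeable_for_days: int = 3,
) -> dict:
    """Apply Vault Lock in compliance mode and return the parameters sent.

    Supplying ChangeableForDays is what selects compliance mode: after that
    grace period the lock can no longer be changed or removed. AWS requires
    the grace period to be at least 3 days.
    """
    if changeable_for_days < 3:
        raise ValueError("ChangeableForDays must be at least 3")
    if min_retention_days > max_retention_days:
        raise ValueError("minimum retention cannot exceed maximum retention")
    params = {
        "BackupVaultName": vault_name,
        "MinRetentionDays": min_retention_days,
        "MaxRetentionDays": max_retention_days,
        "ChangeableForDays": changeable_for_days,
    }
    client.put_backup_vault_lock_configuration(**params)
    return params
```

For example, lock_vault_compliance_mode(boto3.client("backup"), "audit-evidence", 365, 2555) would request a 3-day grace period; the vault name and retention values are hypothetical. Because the lock becomes irreversible once the grace period passes, the retention bounds deserve review before the call is made.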

Identity governance depth

The landing zone uses AWS IAM Identity Center as the only entry point for human access, with short‑lived, role‑based credentials and no long‑lived IAM users for day‑to‑day work.

- Permission sets: mapped to teams and responsibilities

- Permission sets are team / job‑specific, not environment‑generic:

- Developer roles are scoped only to workload accounts (for example, sandbox and future prod workload OUs), and do not have access to the platform control accounts (Network, Security, Operations, Management).

- Operations, Security, and Network roles are granted access to the relevant platform accounts and, where required, to selected workload accounts, reflecting their cross‑cutting responsibilities.

- Finance / billing and Audit roles are limited to the minimum set of accounts and services needed to perform billing analysis and read‑only compliance checks.
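The mapping above can be checked mechanically. The sketch below assumes assignments have already been collected (for example from Identity Center or from the Terraform inputs) into a simple dictionary; the account names and the allowed-scope table are hypothetical stand-ins that follow the grouping described here.

```python
# Hypothetical account names, grouped as described in this appendix.
WORKLOAD = {"Sandbox", "Dev", "Staging", "Prod"}
PLATFORM = {"Management", "Network", "Security", "Operations"}

# Hypothetical rule set: the accounts each permission set may ever be assigned to.
ALLOWED_SCOPE = {
    "Developer": WORKLOAD,
    "Operations": {"Operations"} | WORKLOAD,
    "Security": {"Security"} | WORKLOAD,
    "Network": {"Network"} | WORKLOAD,
    "Billing": {"Management"},
}

# Sanity check on the rule set itself: developers never reach platform accounts.
assert not (ALLOWED_SCOPE["Developer"] & PLATFORM)


def out_of_scope(assignments: dict[str, set[str]]) -> dict[str, set[str]]:
    """Return, per permission set, any assigned accounts outside its allowed scope.

    Unknown permission sets have an empty scope, so all their assignments are flagged.
    """
    findings = {}
    for permission_set, accounts in assignments.items():
        extra = set(accounts) - ALLOWED_SCOPE.get(permission_set, set())
        if extra:
            findings[permission_set] = extra
    return findings


print(out_of_scope({"Developer": {"Sandbox", "Network"}, "Billing": {"Management"}}))
```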

Developers receive a power‑user style permission set in workload accounts so they can:

- Create and manage application resources

- Create IAM roles needed for their applications

- Iterate quickly in their own accounts without constant platform‑team intervention

To prevent this from turning into full platform ownership, a permissions boundary is attached to the developer role:

- The boundary explicitly blocks high‑risk actions, such as:

- Creating IAM users

- Escalating their own privileges or bypassing SSO

- Modifying shared platform constructs such as networking, security services, or org‑wide logs

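- As a result, developers can create roles and policies for their applications only within the guardrails defined by the boundary. A boundary does not reject a broader policy at creation time; instead, effective permissions are the intersection of the identity policy and the boundary, so any permission beyond what the boundary allows simply does not take effect. For roles that developers create themselves, this relies on role creation being conditioned on attaching the boundary (typically via the iam:PermissionsBoundary condition key).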
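The intersection behavior can be illustrated with a simplified model. The boundary contents below are hypothetical examples of the high-risk actions listed above, and the evaluator ignores conditions, resources, and case-insensitive action matching; it only shows that an over-broad identity policy is capped by the boundary.

```python
from fnmatch import fnmatchcase

# Hypothetical developer boundary: allow broadly, explicitly deny high-risk actions.
BOUNDARY_ALLOW = ["*"]
BOUNDARY_DENY = [
    "iam:CreateUser",                     # no IAM users
    "iam:DeleteRolePermissionsBoundary",  # no removing the boundary
    "ec2:CreateVpc",                      # shared networking stays with the platform
    "cloudtrail:*",                       # org-wide logging stays with the platform
]


def matches(action: str, patterns: list[str]) -> bool:
    return any(fnmatchcase(action, p) for p in patterns)


def is_effective(action: str, identity_allow: list[str]) -> bool:
    """Simplified intersection: identity policy AND boundary must allow; a boundary deny wins."""
    return (
        matches(action, identity_allow)
        and matches(action, BOUNDARY_ALLOW)
        and not matches(action, BOUNDARY_DENY)
    )


# An over-broad policy a developer might attach to an application role.
app_policy = ["*"]
print(is_effective("s3:PutObject", app_policy))            # True
print(is_effective("iam:CreateUser", app_policy))          # False
print(is_effective("cloudtrail:StopLogging", app_policy))  # False
```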

Network maturity thresholds

The landing zone supports two connectivity models and makes the trade‑off explicit.

When VPC peering is enough

For early‑stage environments, VPC peering is used as a low‑cost, low‑complexity solution (peering connections carry no hourly charge, although data transfer charges can still apply):

- Scope: a small number of workload accounts such as sandbox, dev, staging, prod.

- Topology: each workload VPC is peered to:

- a central network VPC (for Client VPN and shared ingress/egress), and

- a central operations VPC (for centralized observability and shared tooling).

- Characteristics:

- Simple routing: essentially hub‑and‑spoke with one or two hubs.

- No need for transitive routing or complex east‑west segmentation.

- Operational overhead and cost remain low; there is no managed data‑plane component to pay for or operate.

In this model, peering provides enough connectivity for:

- Engineers to reach workloads over the Client VPN.

- Central monitoring and operations tools to reach resources in each workload VPC.

As long as the organization stays within this small, hub‑and‑spoke footprint, peering is appropriate and cost‑effective.

When to move to Transit Gateway

As the environment grows, a full mesh or large multi‑account topology quickly makes peering hard to manage: a full mesh of n VPCs needs n(n-1)/2 peering connections, route tables multiply, and peering does not support transitive routing. At that point, the platform should transition to AWS Transit Gateway (TGW).
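The growth in link count is easy to quantify. The sketch below compares the two-hub peering model described above (each workload VPC peered to the network and operations hubs, with any hub-to-hub link left uncounted), a full mesh over the same VPCs, and a Transit Gateway where each VPC needs one attachment. The workload counts are arbitrary examples.

```python
def dual_hub_peerings(workload_vpcs: int) -> int:
    """Two-hub model: each workload VPC peers with the network and operations hubs."""
    return 2 * workload_vpcs


def full_mesh_peerings(vpcs: int) -> int:
    """Links needed for every VPC to reach every other VPC directly."""
    return vpcs * (vpcs - 1) // 2


def tgw_attachments(vpcs: int) -> int:
    """With Transit Gateway, each VPC needs a single attachment."""
    return vpcs


for workloads in (4, 10, 30):
    total = workloads + 2  # workload VPCs plus the two hub VPCs
    print(workloads, dual_hub_peerings(workloads), full_mesh_peerings(total), tgw_attachments(total))
```

The two-hub model grows linearly but gives no workload-to-workload path; a mesh adds that path at quadratic cost. Transit Gateway keeps growth linear while adding transitive routing, in exchange for per-attachment and data-processing charges.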

Trigger conditions typically include one or more of:

- Account / VPC scale

- More than a handful of workload accounts, or multiple VPCs per account, where managing individual peering links and route tables becomes error‑prone.

- Routing complexity

- Need for transitive connectivity (workload‑to‑workload via a shared hub, not just workload‑to‑network/operations).

- Need for more explicit east‑west segmentation between different environments, tenants, or business units.

- Hybrid connectivity

- Integration with on‑premises networks or other clouds, where TGW becomes the natural hub for VPNs or Direct Connect attachments.

- Governance and standardization

- Desire to express routing policy and segmentation once, in a central place, rather than duplicating rules across many VPC route tables.

In that phase, TGW becomes the authoritative hub:

- All workload, network, and shared‑services VPCs attach to the Transit Gateway.

- Routing and segmentation policies are defined on the TGW route tables, rather than through a mesh of individual peering links.

- The peering‑based pattern is retired or kept only for very small, isolated scenarios.
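Segmentation on TGW route tables comes from two settings per attachment: which route table it is associated with, and which route tables it propagates its routes into. A toy model of that idea follows; the attachment and route-table names are hypothetical, and real TGW route tables also support static and blackhole routes, which are omitted here.

```python
# Hypothetical design: each attachment is associated with exactly one route table.
ASSOCIATION = {
    "prod-vpc": "rt-prod",
    "dev-vpc": "rt-dev",
    "shared-services-vpc": "rt-shared",
    "network-vpc": "rt-shared",
}

# Each route table learns routes only from the attachments that propagate into it.
PROPAGATIONS = {
    "rt-prod": {"shared-services-vpc", "network-vpc"},
    "rt-dev": {"shared-services-vpc", "network-vpc"},
    "rt-shared": {"prod-vpc", "dev-vpc", "shared-services-vpc", "network-vpc"},
}


def can_route(src: str, dst: str) -> bool:
    """True if the route table associated with src has a propagated route to dst."""
    return dst in PROPAGATIONS[ASSOCIATION[src]]


def can_communicate(a: str, b: str) -> bool:
    # Two-way traffic needs a route in each direction.
    return can_route(a, b) and can_route(b, a)


print(can_communicate("prod-vpc", "shared-services-vpc"))  # True
print(can_communicate("prod-vpc", "dev-vpc"))              # False
```

In this layout prod and dev both reach shared services and the network hub but never each other, and that rule is expressed once in the route tables rather than in every VPC.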

Compliance claims

The landing zone is designed to ship with high baseline alignment to three standards available natively in AWS Security Hub:

- CIS AWS Foundations Benchmark v5

- AWS Foundational Security Best Practices v1.0.0

- NIST SP 800‑53 Rev. 5 (as implemented through Security Hub)

In an empty landing zone (no application workloads deployed), with only the core platform services enabled, the Security Hub score for each of these three standards exceeds 95%, after a small set of controls that do not match the intended operating model has been deliberately disabled.

How the baseline is measured

- The score refers specifically to Security Hub control coverage in the landing zone accounts, with the controls listed below disabled by design.

- “>95%” means that, for each benchmark, at least 95% of the enabled controls are in a “passed” or “not applicable” state in a freshly deployed, empty landing zone.

- Workload‑specific controls that require application resources (for example, specific logging behaviors inside application S3 buckets) are expected to be addressed by workload teams using the same governance model; they are outside the scope of the platform‑only baseline.
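The measurement rule above can be written down directly. The sketch implements this appendix's definition (passed or not applicable, over enabled controls), which is not necessarily identical to the formula Security Hub uses for its own security score. The status labels are hypothetical, and the statuses in the example are made up to show the arithmetic.

```python
def baseline_score(statuses: dict[str, str], disabled: set[str]) -> float:
    """Percentage of enabled controls whose status is 'passed' or 'not_applicable'."""
    enabled = {cid: s for cid, s in statuses.items() if cid not in disabled}
    if not enabled:
        raise ValueError("no enabled controls to score")
    ok = sum(1 for s in enabled.values() if s in {"passed", "not_applicable"})
    return 100.0 * ok / len(enabled)


# Made-up statuses for one standard; IAM.6 and S3.15 are disabled by design.
statuses = {
    "IAM.6": "failed",
    "S3.15": "failed",
    "CloudTrail.1": "passed",
    "Config.1": "passed",
    "EC2.2": "not_applicable",
    "GuardDuty.1": "failed",
}
score = baseline_score(statuses, {"IAM.6", "S3.15"})
print(score, score > 95.0)  # 75.0 False
```

The check would be run once per standard, since the threshold applies to each benchmark separately.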

Intentionally disabled controls

The following Security Hub controls are disabled at the platform level with the explicit rationale “Aligned to internal security” (or operational constraints), because they either do not match the intended operating model or are better handled through internal processes:

- IAM.6 – Hardware MFA for root user

The root user is not used for day‑to‑day operations in member accounts, and root credentials are tightly controlled. Enforcing hardware MFA for every root user in every account is treated as an internal policy decision, not a landing‑zone baseline requirement.

- IAM.18 – Dedicated support role for AWS Support

The use and structure of support roles are defined by internal incident‑management processes. The platform does not enforce a specific support‑role pattern globally.

- S3.20 – MFA delete on general‑purpose buckets

MFA delete is not enabled by default because it complicates automation and lifecycle management for general‑purpose buckets. Strong controls are instead provided through central logging, SCPs, and backup / retention patterns.

- S3.22 / S3.23 – Object‑level read/write logging on general‑purpose buckets

Object‑level logging for every general‑purpose bucket is not enforced globally because it has significant cost and noise implications. Where required, it can be enabled for specific data‑classified workloads as part of application‑level security design.

- CloudFormation.3 – Termination protection on CloudFormation stacks

Global enforcement of termination protection does not align with the platform’s automation model and lifecycle expectations for certain stacks. It is applied selectively where appropriate.

- CloudFormation.4 – Service roles associated with CloudFormation stacks

The use of explicit service roles for every stack is treated as an implementation detail of specific pipelines and modules, not a universal hard requirement at the landing‑zone layer.

- CloudWatch.16 – Minimum 365‑day retention for log groups

A hard minimum of 365 days across all log groups is too rigid and costly, and cannot be adapted per environment or workload; retention is instead managed by policy and internal requirements.

- S3.15 – Object Lock on general‑purpose buckets

S3 Object Lock is not enabled by default on all general‑purpose buckets. Immutability for audit data is provided at the backup layer via AWS Backup vaults with compliance‑mode Vault Lock, rather than by locking every primary bucket.
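Keeping the disabled controls and their rationale in code makes the exceptions reviewable. A minimal sketch follows, assuming the request shape of the Security Hub BatchUpdateStandardsControlAssociations API as exposed in boto3 (StandardsArn, SecurityControlId, AssociationStatus, UpdatedReason). The reason strings are paraphrased from the rationale above, and the standards ARN in the example is a placeholder.

```python
# Controls disabled by design, mapped to a short recorded reason (paraphrased).
DISABLED_CONTROLS = {
    "IAM.6": "Aligned to internal security: root MFA governed by internal policy",
    "IAM.18": "Aligned to internal security: support roles defined by incident process",
    "S3.20": "Operational constraint: MFA delete conflicts with automation",
    "S3.22": "Operational constraint: object-level write logging enabled per workload",
    "S3.23": "Operational constraint: object-level read logging enabled per workload",
    "CloudFormation.3": "Operational constraint: termination protection applied selectively",
    "CloudFormation.4": "Aligned to internal security: service roles set per pipeline",
    "CloudWatch.16": "Operational constraint: retention set per environment",
    "S3.15": "Aligned to internal security: immutability via locked backup vault",
}


def disable_updates(standards_arn: str) -> list[dict]:
    """Build StandardsControlAssociationUpdates entries that disable each control for one standard."""
    return [
        {
            "StandardsArn": standards_arn,
            "SecurityControlId": control_id,
            "AssociationStatus": "DISABLED",
            "UpdatedReason": reason,
        }
        for control_id, reason in sorted(DISABLED_CONTROLS.items())
    ]


updates = disable_updates("arn:aws:securityhub:eu-west-1::standards/example/v/1.0.0")
print(len(updates), "controls to disable")
```

With boto3 the list would be passed as securityhub.batch_update_standards_control_associations(StandardsControlAssociationUpdates=updates), once per enabled standard.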

Technical FAQs

Does the landing zone support delegated administration?

Yes. The design explicitly delegates administration of core security and operational services away from the Management account into specialized platform accounts, especially Security and Operations.

Is this using Control Tower?

No. Nimbiora is a custom Terraform and Terragrunt landing zone built on AWS Organizations rather than a Control Tower implementation. It follows many of the same architectural goals, but ownership and extensibility are centered on the Terraform codebase rather than on an AWS-managed landing-zone opinion.

Can the sandbox evolve into production?

Yes. The design intent is to start with a safe, low-cost sandbox baseline and then harden the same structural model into a production-grade landing zone without replacing the account topology or repository layout.

Is the platform opinionated?

Yes. It is intentionally opinionated in account layout, centralization strategy, service selection, and automation approach. This is a deliberate feature, not a limitation, because it reduces ambiguity and accelerates implementation decisions for teams that do not want to invent a landing zone from first principles.

Conclusion

The Nimbiora Landing Zone v1.0.0 presents a solid technical foundation for a custom AWS multi-account platform centered on strong account separation, centralized security services, centralized evidence, infrastructure as code, and a practical migration path from sandbox to production. Its strongest characteristics are architectural clarity and a bias toward long-term maintainability rather than quick, opaque assembly.